high energy physics experienced through real-time audio


NOTE: AS OF DECEMBER 2018, THE LHC HAS ENTERED A 2-YEAR PLANNED SHUTDOWN. PLEASE CONSULT THE QUANTIZER SOUNDCLOUD AND ACADEMIC PAPERS.

Methodology

For a detailed summary of the project, consult the project's NIME conference paper, ICHEP outreach paper and CHI extended abstract.

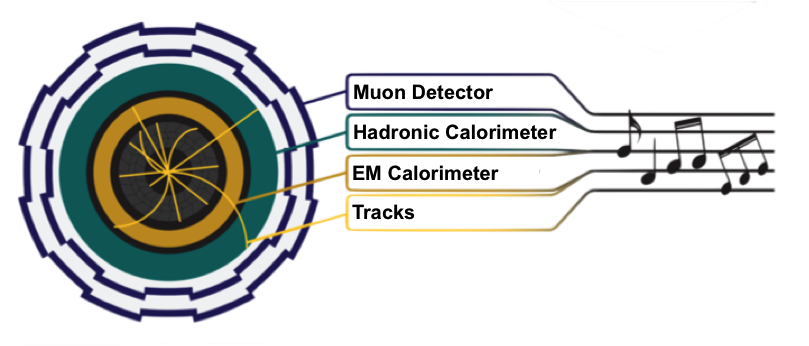

A tiny subset of collision data from the ATLAS Detector is generated and streamed in real time into a sonification engine built on Python, Pure Data, Ableton, and Icecast. The sonification engine takes data from each collision event, scales and shifts the data (to ensure that the output is in the audible frequency range), and maps the data onto different musical scales. From there, a MIDI stream triggers sound samples according to the geometry and energy of the event properties. The composer has a lot of flexibility in how the collision data is streamed with respect to the geometry of the event, and can choose whether or not to discretize the data into beats.

The data currently being streamed includes energy deposits from the hadronic endcap calorimeter and the liquid argon calorimeter, as well as energy from particle tracks and location information from a muon trigger (RPC). You can learn more about the different layers of the ATLAS detector here. Each event currently produces ~30 seconds of unique audio. Some composers have gone further and built their own patches in Max/MSP or their own web apps using the processed data streaming out of Python.

This platform gives physics enthusiasts a new way to experience real-time ATLAS collision data, and gives musical composers a new way to play with it. It also raises questions about how audio might serve a more practical role in data analysis.
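The following sketch shows why the MIDI note ranges used later on this page stay in the audible band. It applies the standard MIDI tuning convention: 12-tone equal temperament, with note 69 = A4 = 440 Hz. This is a generic conversion, not code from the Quantizer engine. The pitch actually heard also depends on the Ableton instrument that plays each note.

```python
def midi_to_hz(note: float) -> float:
    """Frequency of a MIDI note number in 12-tone equal temperament (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((note - 69) / 12)


# Bottom of the LAr range, middle C, and top of the RPC range used in the House stream
for note in (36, 60, 96):
    print(f"MIDI {note}: {midi_to_hz(note):.1f} Hz")
```

Across the House stream's note ranges, this gives roughly 65 Hz to 2.1 kHz, comfortably inside human hearing.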

Audio is an effective tool for communicating information. A smoke detector warns of potential fire and a stethoscope helps a medical doctor determine the rhythms of a patient’s heart. Audio is also used when monitoring physics experiments to give information about the status and quality of the equipment and data. For example, an audio cue alerts control room workers when the particle beams in the Large Hadron Collider are dumped.

Sonification has developed into an active area of research (see, for example, the ICAD conference). As technology advances, more complex and subtle information can be communicated using audio, even in real time. The Quantizer platform allows researchers to sonify more complex particle physics information and communicate it to listeners.

The strength of the Quantizer platform lies in how users can compose aesthetically pleasing music with ease. This gives composers the chance to learn about the physics in the ATLAS data by trying to creatively make use of some of the underlying patterns. Those patterns, if highlighted by the composer in the audio, can then also teach listeners about the physics properties of the collision event.

Composing a musical piece that plays by itself for hours on end, without any interaction or intervention from a musician, is a somewhat complicated goal: you cannot as easily force separate verses, a chorus, and solos. With these two pieces I focused on musical styles in which large amounts of repetition are common and embraced. There is no beginning, no climax, and no end, just simple variations along the journey.

Only four parts of the ATLAS detector's data were used to compose the House piece. The guitars are predominantly controlled by two parts of the ATLAS calorimeters: their rhythms are human-composed, but the musical notes are mostly determined by the data. The freestyle organs come from the track and RPC data. The minor percussive elements do not depend on the data but help to fill out the sound. I invite drummers to overlay a more interesting drum track and play along with the data from the world's highest-energy particle accelerator.

One of the major differences between these two pieces and other sonifications is the imposition of timing, and especially of a beat. Many sonifications let the data determine as many aspects of the music as possible, which often produces musical structures that sound very random. The LHC and ATLAS data actually carry a great deal of timing structure imposed by the experimenters, so I have imposed some timing structure too. This timing also moves the sonifications out of the world of experimental sound and toward more mainstream styles of music.

The ATLAS particle physics data has a lot of patterns in it, but the data can seem random to the untrained eye or ear. When composing the House piece, I used non-obvious physics patterns in the data to produce patterns in the music. For example, the most probable energies are more closely aligned with the root notes of the musical scale. As a result, the music is more likely to return to a pleasing home-base note at the end of the bar rather than constantly leaving the listener hanging. Only a small number of the physics patterns in the data have been exploited in these two pieces, and I look forward to pushing that further in future compositions.
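The text does not spell out how the most probable energies are aligned with root notes. The sketch below shows one simple way to get that effect, and it is purely an assumption. It finds the most common note produced by a linear energy-to-MIDI mapping. It then shifts the whole mapping by the smallest number of semitones that lands that note on a root. The root is pitch class 0 (C), matching the C-rooted scale of the House stream. The function name and parameters are hypothetical.

```python
import statistics


def root_aligned_shift(values, lo, hi, note_lo, note_hi, root_pitch_class=0):
    """Hypothetical helper: semitone shift that moves the most common mapped note onto a root note.

    values are raw data (e.g. energies) already restricted to [lo, hi]; they are mapped
    linearly onto [note_lo, note_hi] and rounded to whole MIDI notes.
    """
    notes = [round(note_lo + (v - lo) / (hi - lo) * (note_hi - note_lo)) for v in values]
    most_common = statistics.mode(notes)  # ties resolve to the first note seen (Python 3.8+)
    shift = (root_pitch_class - most_common) % 12  # upward distance to the next root, 0..11
    if shift > 6:
        shift -= 12  # moving down is shorter, so shift down instead
    return shift
```

For example, `root_aligned_shift([10, 10, 10, 50], 0, 100, 60, 90)` returns -3. The most common mapped note is 63 (Eb), and shifting it down three semitones lands on C (60). Shifted notes can fall slightly outside the original range, so a real patch would need to clip them again.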

Partial details on how the data is turned into music for the House stream:

Approximate detector locations:

- Pink: Tracks detector

- Light green: LAr Calorimeter

- Dark green: HEC Calorimeter

- Purple: RPC

- Grey: Other detector components that are not currently being used for sonification

Each type of data is played by one or more Ableton Live instruments:

- RPC = Funky organ, Organ5 Slow decay

- Tracks = Funky organ, Organ5 Slow decay

- LAr = Grand piano

- HEC = Electric1 basic bass, Please rise for Jimi, Woofer loving bass, strangler (guitar)

A tom drum is also played, but it is not connected in any way to the data.

- step 1: Clean and filter the data. Only use the parts of the event that have high enough energy and momentum and are in the central region of the detector.

- step 2: Filter the data even further by requiring the data to lie between specific minimum and maximum values (e.g. enforce a minimum momentum on each track). This ensures that the data can be properly scaled for sonification. These are very loose selections to avoid imposing too many restrictions on the composer.

- step 3: Split the detector into bins of "pseudo-rapidity" (eta), an angular coordinate used in particle physics and partially depicted in the above gif. Associate all of the data with a nearby eta bin. The musical notes are played in order of eta position, from negative eta to positive eta.

- step 4: Stream all the data associated with a single eta bin from Python to Pure Data. Do this for each eta bin.

- step 5: Apply a finer selection to the data based on the energies and positions. These selections can be changed in real-time by the composer in a more DJ-like fashion.

- step 6: Scale the data from their allowed ranges into the allowed MIDI note ranges. For example, a track’s momentum could be required to be between 20 GeV and 1000 GeV; the track momenta are then scaled into the MIDI note range 60 to 90.

MIDI note ranges:

- RPC: 60-96

- Track: 60-90

- HEC: 48-60

- LAr: 36-48

(Middle C on a piano is MIDI note 60.)

- step 7: Set the duration of each note and determine whether it is allowed to be played on this beat, as set by the composer’s beat structure.

Musical note durations:

- HEC: 1/2 beat

- LAr: 1/2 beat

- RPC: 3s = almost 3 bars (exact duration depends on the selected instrument)

- track: 2s = almost 2 bars (exact duration depends on the selected instrument)

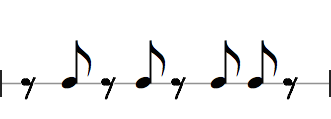

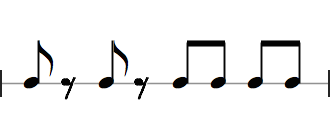

The musical beats for the LAr, HEC, and drums are forced to follow fixed patterns:

[Figures: LAr Beats, HEC Beats, Drum Beats]

- step 8: Round the MIDI note value to the nearest allowed MIDI note value, as defined by the scale.

- MIDI note scale (semitone offsets from the root): 0, 3, 5, 7, 10, 12

- [Equivalent to the musical notes C, Eb, F, G, Bb, C, i.e. the C minor pentatonic scale]

- step 9: Output the musical notes as sound using the instruments listed above.
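The House-stream steps above (filtering, eta binning, scaling, and rounding to the scale) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Quantizer code. The function names, the eta bin edges, and the (eta, value) input format are hypothetical. The 20 to 1000 GeV track window, the 60 to 90 MIDI range, and the scale offsets 0, 3, 5, 7, 10, 12 come from the text. Pseudo-rapidity uses the standard definition eta = -ln tan(theta/2), where theta is the polar angle.

```python
import math
from collections import defaultdict

SCALE = (0, 3, 5, 7, 10, 12)  # semitone offsets from C, as listed in step 8


def pseudorapidity(theta):
    """Standard definition eta = -ln(tan(theta / 2)), theta = polar angle in radians."""
    return -math.log(math.tan(theta / 2))


def bin_by_eta(hits, edges):
    """Step 3 (sketch): group (eta, value) pairs into eta bins, ordered from negative to positive eta."""
    bins = defaultdict(list)
    for eta, value in hits:
        for i in range(len(edges) - 1):
            if edges[i] <= eta < edges[i + 1]:
                bins[i].append(value)
                break  # each hit goes into at most one bin; hits outside all bins are skipped
    return [bins[i] for i in sorted(bins)]


def scale_to_midi(value, lo, hi, note_lo, note_hi):
    """Step 6: linear map of a value in [lo, hi] onto the MIDI range [note_lo, note_hi]."""
    return note_lo + (value - lo) / (hi - lo) * (note_hi - note_lo)


def snap_to_scale(note, root=0, scale=SCALE):
    """Step 8: round a fractional MIDI note to the nearest scale note (ties go to the lower note)."""
    allowed = [12 * octave + root + step for octave in range(11) for step in scale]
    return min(allowed, key=lambda n: abs(n - note))


def track_notes(tracks, edges, lo=20.0, hi=1000.0, note_lo=60, note_hi=90):
    """Steps 2, 3, 6 and 8 for track momenta (GeV); returns one list of notes per occupied eta bin."""
    return [
        [snap_to_scale(scale_to_midi(p, lo, hi, note_lo, note_hi)) for p in eta_bin if lo <= p <= hi]
        for eta_bin in bin_by_eta(tracks, edges)
    ]


# Made-up example tracks as (eta, momentum in GeV)
tracks = [(-1.2, 100.0), (0.4, 510.0), (-1.2, 1000.0), (2.0, 5.0)]
edges = [-2.5, -1.5, -0.5, 0.5, 1.5, 2.5]
print(track_notes(tracks, edges))
```

This prints `[[63, 89], [75], []]`:

- The 100 GeV track maps to about 62.4 and rounds to Eb (63).
- The 510 GeV track lands exactly on 75.
- The 1000 GeV track maps to 90, midway between scale notes 89 and 91, and rounds down to 89.
- The 5 GeV track fails the 20 GeV minimum and is dropped.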

Note that between events, the HEC and LAr (and drums) continue the same rhythm while repeating the last musical note played. This makes the transition from one event to the next more pleasing.
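The hold behaviour described above can be sketched as a small generator. On each beat of the fixed HEC/LAr rhythm, it plays the newest data note if there is one and otherwise repeats the last note played. This is an illustrative assumption about the logic, not the actual Pure Data patch. `None` stands for a beat with no new data.

```python
from typing import Iterable, Iterator, Optional


def hold_last_note(beats: Iterable[Optional[int]]) -> Iterator[Optional[int]]:
    """Yield one note per beat, repeating the previous note whenever a beat carries no new data."""
    last = None
    for note in beats:
        if note is not None:
            last = note
        yield last  # stays None until the first note arrives


print(list(hold_last_note([48, None, 51, None, None])))
```

Here the output is `[48, 48, 51, 51, 51]`: the rhythm never stops, and the pitch only changes when new event data arrives.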

This work attempts to provide a musical metaphor for the collisions and subsequent energy showers that happen in the ATLAS detector, using spatial cues to reflect the geometry of each event. Each collision event begins with the colliding protons approaching from the left and right. Because they initially carry all of the energy of the reaction, they approach with a sound like a truck. At the moment of collision, when they meet in the center, a wavefront of sound begins. It is made of filtered noise and a choir, reflecting the total energy of the event and the missing momentum. On top of this ambient sound, each layer of the detector chimes in from the center outward: first, particle tracks warble across the space; then, calorimeter energy deposits register around the space; finally, bright plinks emanating from the periphery reflect muon detection by the RPC.

Technical Overview

- step 1: Physics data is selected based on desired properties of the data (see the House stream explanation for details)

- step 2: The first few seconds of audio are driven by two quantities: one probes the total amount of energy measured in the event, and the other indirectly probes the amount of undetected energy. These data properties are streamed first and control certain characteristics of a choir/filtered-noise wavefront audible at the beginning of each event

- step 3: Next, the remaining physics information is streamed from the innermost detector layer to the outermost (first tracks, then calorimeter energy, then muon candidate hits), using a 5:2:2 time ratio over the event's total of 25 seconds of audio. For each layer, physics information is streamed purely with respect to an angular coordinate, so the timing of the data is entirely controlled by the detector geometry. No beat structure is imposed, in contrast to the other two live streams.

- step 3a: The track data is spatialized: the audio is distributed between the two speakers to reflect the directions in which the particles are traveling. (The composer manually shifts the starting position of each track to create the auditory effect of the tracks passing through the listener’s head.) This effect is easier to hear in a version of the stream with more channels/speakers. Only some geometric coordinates are available in the data, so the track momentum is used to calculate the duration of each track’s auditory representation.

- step 3b: Next, the calorimeter energy deposits trigger sounds timed by their location in the detector

- step 3c: Finally, the muon detector hits trigger sounds timed by their location in the detector
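The timing in step 3 can be worked out directly. A 5:2:2 split of 25 seconds gives about 13.9 s for tracks and about 5.6 s each for calorimeter energy and muon hits. The sketch below computes those windows and places each hit inside its layer's window in proportion to its angle. All names are hypothetical, and two parts are assumptions:

- The text does not say which angular coordinate is used, so the azimuthal range [-π, π] is assumed.
- The equal-power stereo panning for step 3a is a standard technique swapped in for illustration, not the platform's documented spatializer.

```python
import math

LAYER_RATIO = {"tracks": 5, "calorimeter": 2, "muons": 2}  # 5:2:2, innermost layer first
TOTAL_SECONDS = 25.0


def layer_windows(ratio=LAYER_RATIO, total=TOTAL_SECONDS):
    """Split the layered part of an event's audio into consecutive (start, end) windows in seconds."""
    windows, start = {}, 0.0
    weight_sum = sum(ratio.values())
    for layer, weight in ratio.items():
        length = total * weight / weight_sum
        windows[layer] = (start, start + length)
        start += length
    return windows


def onset_time(angle, layer, windows):
    """Place a hit in its layer's window in proportion to its angle (assumed to lie in [-pi, pi])."""
    start, end = windows[layer]
    return start + (angle + math.pi) / (2 * math.pi) * (end - start)


def equal_power_pan(direction):
    """Illustrative (left, right) gains for a direction in [-pi/2, pi/2]; -pi/2 is hard left."""
    position = (direction + math.pi / 2) / math.pi  # 0.0 = left speaker, 1.0 = right speaker
    return math.cos(position * math.pi / 2), math.sin(position * math.pi / 2)


windows = layer_windows()
for layer, (start, end) in windows.items():
    print(f"{layer}: {start:.2f} s to {end:.2f} s")
print(round(onset_time(0.0, "muons", windows), 2))
```

Running it prints windows of 0.00 to 13.89 s, 13.89 to 19.44 s, and 19.44 to 25.00 s. A muon hit at angle 0 lands mid-window, at 22.22 s. Equal-power gains keep left² + right² = 1, so a track's loudness stays constant as it moves between the speakers.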

Artist Statement: Ewan Hill (piece inspired by "He's a Pirate")

Only four parts of the ATLAS detector's data were used in this piece. The main rhythm is controlled by the user and is inspired by a melody in the Pirates of the Caribbean score. Four bars of notes are played before the rhythm repeats. The music is predominantly controlled by two parts of the ATLAS calorimeters, and the musical notes are largely determined by the data. To help reset each four-bar phrase, the root note of the piece is played at the end of the last bar, independently of the data. The track and muon detector hit data provide the occasional accent. The instrumentation is made up of an ensemble of strings, brass, and woodwinds, as well as a grand piano and timpani. A musical note generated by the data is transposed (within Ableton Live) up and/or down by one and/or two octaves. Those few notes are all played together, which helps to expand the sound without designing a separate bass line and melody. This piece was originally composed to be played live with other musicians but was modified to play solo for this website. I had three intentions for it: to write a piece with a different time signature from my previous pieces (3/4 instead of 4/4), to use the updated software for a full phrase of music before the rhythms repeat, and to test other features within Ableton Live. In many other respects, the piece transforms the physics data into musical notes in much the same way as my previous pieces.
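The octave doubling and the phrase-ending root note can be sketched as follows. This illustration rests on several assumptions:

- The exact set of octave shifts is hypothetical.
- So is the root note, set to MIDI 60 here.
- So is the rule that the root replaces the data on the last beat of the four-bar 3/4 phrase.
- The real transposition happens inside Ableton Live rather than in Python.
- The sketch ignores the user-controlled rhythm and simply gives every beat one data note.

```python
BEATS_PER_BAR = 3  # 3/4 time, from the artist statement
BARS_PER_PHRASE = 4


def octave_stack(note, shifts=(-24, -12, 0, 12, 24)):
    """Notes sounded together for one data note; this set of octave shifts is an assumption."""
    return [note + s for s in shifts if 0 <= note + s <= 127]  # keep valid MIDI numbers only


def phrase(data_notes, root=60):
    """One four-bar phrase: data notes on every beat except the last, which always plays the root."""
    length = BEATS_PER_BAR * BARS_PER_PHRASE
    beats = [octave_stack(n) for n in data_notes[: length - 1]]
    beats += [[] for _ in range(length - 1 - len(beats))]  # rests if the data runs out
    beats.append(octave_stack(root))
    return beats
```

For example, `phrase([62] * 20)` returns 12 beats. The first 11 each stack D across five octaves, and the last is the root stack `[36, 48, 60, 72, 84]`, so every phrase resolves home whatever the data did.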

Approximate detector locations:

- Pink: Tracks detector

- Light green: LAr Calorimeter

- Dark green: HEC Calorimeter

- Purple: RPC

- Grey: Other detector components that are not currently being used for sonification

[Figure panels: HEC Energy, LAr Energy, RPC eta, track momentum]

The different types of data are played by multiple instruments (using Ableton Live instruments).

- step 1: Clean and filter the data. Only use the parts of the event that have high enough energy and momentum and are in the central region of the detector.

- step 2: Filter the data even further by requiring the data to lie between specific minimum and maximum values (e.g. enforce a minimum momentum on each track). This ensures that the data can be properly scaled for sonification. These are very loose selections to avoid imposing too many restrictions on the composer.

- step 3: Split the detector into bins of "pseudo-rapidity" (eta), an angular coordinate used in particle physics and partially depicted in the above gif. Associate all of the data with a nearby eta bin. The musical notes are played in order of eta position, from negative eta to positive eta.

- step 4: Stream all the data associated with a single eta bin from Python to Pure Data. Do this for each eta bin.

- step 5: Apply a finer selection to the data based on the energies and positions. These selections can be changed in real-time by the composer in a more DJ-like fashion.

- step 6: Scale the data from their allowed ranges into the allowed MIDI note ranges.

- step 7: Set the duration of each note and determine whether it is allowed to be played on this beat, as set by the composer’s beat structure.

- step 8: Round the MIDI note value to the nearest allowed MIDI note value, as defined by the scale.

Quantizer + Al Blatter live in concert at the 2015 Montreux Jazz Festival: The Physics of Music and the Music of Physics. Special thanks to Ellen Walker, our first groupie!

Prerecorded clip from the "Spacey" track (using masterclass data), played at the Montreux Jazz Festival: The Physics of Music and the Music of Physics. Composition by Evan Lynch.


Happy pop rock: Improvised with minor post-recording edits. Dedicated to Hayley Thompson.


Suitar samba: Improvised with minor post-recording edits. Many thanks to Gina Lupino and Kirk Elliott for helping us get a basic samba beat going. Apologies to Gina and Kirk for what Ewan did to the samba piece after you left. ;)


Funky: Improvised with minor post-recording edits.


Strings: Improvised. Needs to be edited.


Experiment on a blues scale: Improvised. Really needs some editing... For Ewan's dad.


What the Quantizer music sounded like in May 2015:

Audio Sample Files

Here are some prerecorded audio files generated from ATLAS data on the platform.

More About ATLAS Experiment

The ATLAS experiment is pushing the frontiers of knowledge by investigating some of the deepest questions of nature: what are the basic forces that shape our universe, are there extra dimensions, and what is the origin of mass? The ATLAS Detector is one of two general-purpose detectors built along the Large Hadron Collider at CERN. The Large Hadron Collider, a 27 km long machine, smashes together particles at amazingly high energies, and the ATLAS detector precisely measures the properties of the particles produced in these collisions. Like a complicated game of connect-the-dots, special software automatically reconstructs the trajectories of the particles ("tracks"). Different detector components serve different tasks, including the identification of particles and the reconstruction of their momenta or energy. From the inside out, they include tracking detectors, calorimeters, and a muon spectrometer. Powerful magnets curve the trajectories of charged particles to make it possible to measure their momenta.
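The link between curvature and momentum can be made concrete with the standard textbook relation for a singly charged particle moving perpendicular to a uniform magnetic field. In practical units, the transverse momentum in GeV is about 0.3 times the field in tesla times the radius of curvature in meters. As an example, take the 2 T field of the ATLAS inner-detector solenoid. A track that curves with a 1 m radius then carries roughly 0.6 GeV of transverse momentum, and higher-momentum tracks are straighter.

```latex
p_T\,[\mathrm{GeV}] \approx 0.3\, B\,[\mathrm{T}]\; R\,[\mathrm{m}],
\qquad
B = 2\,\mathrm{T},\; R = 1\,\mathrm{m} \;\Rightarrow\; p_T \approx 0.3 \times 2 \times 1 = 0.6\ \mathrm{GeV}
```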

Want to learn more about how to interpret the event display above? Check out this article.

Contacts and Acknowledgements

This platform was built as a collaboration between Juliana Cherston (Responsive Environments Group, MIT Media Lab) and Ewan Hill (UVic, TRIUMF, ATLAS).

We wish to thank the ATLAS experiment for letting us use the data and for their continued support. Thank you to Media Lab composers Evan Lynch and Akito van Troyer for developing some of the creative custom synthesizers and mappings used in this project. Thank you to CERN for the invitation to perform at the Montreux Jazz Festival, and to Al Blatter for his performance collaboration. Thank you to Ableton Live for their support. Thank you to Domenico Vicinanza (Department of Computing and Technology, Anglia Ruskin University, and GEANT Association) for providing design feedback in the project's early stages and for helping to present the project at different venues, including organising the ICAD workshop. Thank you to Felix Socher for sharing some of his ATLAS data parsing code. Finally, thank you to Fernando Fernandez Galindo and the atlas-live team for their data streaming assistance.